—————

Free Secure Email – Transcom Sigma

Boost Inflight Internet

Transcom Hosting

Transcom Premium Domains

News

Manufacturing Sector Reeling From Financial Costs of Ransomware

—————

S3 Ep146: Tell us about that breach! (If you want to.)

—————

The Need for Trustworthy AI

If you ask Alexa, Amazon’s voice assistant AI system, whether Amazon is a monopoly, it responds by saying it doesn’t know. It doesn’t take much to make it lambaste the other tech giants, but it’s silent about its own corporate parent’s misdeeds.

When Alexa responds in this way, it’s obvious that it is putting its developer’s interests ahead of yours. Usually, though, it’s not so obvious whom an AI system is serving. To avoid being exploited by these systems, people will need to learn to approach AI skeptically. That means deliberately constructing the input you give it and thinking critically about its output.

Newer generations of AI models, with their more sophisticated and less rote responses, are making it harder to tell who benefits when they speak. Internet companies’ manipulating what you see to serve their own interests is nothing new. Google’s search results and your Facebook feed are filled with paid entries. Facebook, TikTok and others manipulate your feeds to maximize the time you spend on the platform, which means more ad views, over your well-being.

What distinguishes AI systems from these other internet services is how interactive they are, and how these interactions will increasingly become like relationships. It doesn’t take much extrapolation from today’s technologies to envision AIs that will plan trips for you, negotiate on your behalf or act as therapists and life coaches.

They are likely to be with you 24/7, know you intimately, and be able to anticipate your needs. This kind of conversational interface to the vast network of services and resources on the web is within the capabilities of existing generative AIs like ChatGPT. They are on track to become personalized digital assistants.

As a security expert and data scientist, we believe that people who come to rely on these AIs will have to trust them implicitly to navigate daily life. That means they will need to be sure the AIs aren’t secretly working for someone else. Across the internet, devices and services that seem to work for you already secretly work against you. Smart TVs spy on you. Phone apps collect and sell your data. Many apps and websites manipulate you through dark patterns, design elements that deliberately mislead, coerce or deceive website visitors. This is surveillance capitalism, and AI is shaping up to be part of it.

Quite possibly, it could be much worse with AI. For that AI digital assistant to be truly useful, it will have to really know you. Better than your phone knows you. Better than Google search knows you. Better, perhaps, than your close friends, intimate partners and therapist know you.

You have no reason to trust today’s leading generative AI tools. Leave aside the hallucinations, the made-up “facts” that GPT and other large language models produce. We expect those will be largely cleaned up as the technology improves over the next few years.

But you don’t know how the AIs are configured: how they’ve been trained, what information they’ve been given, and what instructions they’ve been commanded to follow. For example, researchers uncovered the secret rules that govern the Microsoft Bing chatbot’s behavior. They’re largely benign but can change at any time.

Many of these AIs are created and trained at enormous expense by some of the largest tech monopolies. They’re being offered to people to use free of charge, or at very low cost. These companies will need to monetize them somehow. And, as with the rest of the internet, that somehow is likely to include surveillance and manipulation.

Imagine asking your chatbot to plan your next vacation. Did it choose a particular airline or hotel chain or restaurant because it was the best for you or because its maker got a kickback from the businesses? As with paid results in Google search, newsfeed ads on Facebook and paid placements on Amazon queries, these paid influences are likely to get more surreptitious over time.

If you’re asking your chatbot for political information, are the results skewed by the politics of the corporation that owns the chatbot? Or the candidate who paid it the most money? Or even the views of the demographic of the people whose data was used in training the model? Is your AI agent secretly a double agent? Right now, there is no way to know.

We believe that people should expect more from the technology and that tech companies and AIs can become more trustworthy. The European Union’s proposed AI Act takes some important steps, requiring transparency about the data used to train AI models, mitigation for potential bias, disclosure of foreseeable risks and reporting on industry standard tests.

Most existing AIs fail to comply with this emerging European mandate, and, despite recent prodding from Senate Majority Leader Chuck Schumer, the US is far behind on such regulation.

The AIs of the future should be trustworthy. Unless and until the government delivers robust consumer protections for AI products, people will be on their own to guess at the potential risks and biases of AI, and to mitigate their worst effects on people’s experiences with them.

So when you get a travel recommendation or political information from an AI tool, approach it with the same skeptical eye you would a billboard ad or a campaign volunteer. For all its technological wizardry, the AI tool may be little more than the same.

This essay was written with Nathan Sanders, and previously appeared on The Conversation.

—————

The Season of Back to School Scams

Authored by: Lakshya Mathur and Yashvi Shah

As the Back-to-School season approaches, scammers are taking advantage of the opportunity to deceive parents and students with various scams. With the increasing popularity of online shopping and digital technology, people are more inclined to make purchases online. Scammers have adapted to this trend and are now using social engineering tactics, such as offering high discounts, free school kits, online lectures, and scholarships, to entice unsuspecting individuals into falling for their schemes.

McAfee Labs has found the following PDFs targeting back-to-school trends. This blog is a reminder for parents on what to educate their children on and how not to fall victim to such fraud.

Fake captcha PDFs campaign

McAfee Labs encountered a PDF file campaign featuring a fake CAPTCHA on its first page, to verify human interaction. The second page contained substantial content on back-to-school advice for parents and students, giving the appearance of a legitimate document. These tactics were employed to make the PDF seem authentic, entice consumers to click on the fake CAPTCHA link, and evade detection.

Figure 1 – Fake CAPTCHA and scammy link

Figure 2 – PDF Second Page

Figure 3 – Zoomed in content from Figure 2

As shown in Figure 1, there is a fake captcha image that, when clicked, redirects to a URL displayed at the bottom left of the figure. This URL has a Russian domain and goes through multiple redirections before reaching its destination. The scam URL contains the text “all hallows prep school uniform,” and leads to a malicious site that sets cookies, monitors user behavior, and collects interactions, sending the data to servers owned by the domain’s operators.

Figures 2 and 3 display the second page of the PDF, designed to appear legitimate both to users and to spam and security scanners.

In this campaign, we identified a total of 13 domains, with 11 being of Russian origin and 2 from South Africa. You can find the complete list of these domains in the final IOC (Indicators of Compromise) section.

All domains were created between 2020 and 2021 and use Cloudflare’s name servers.

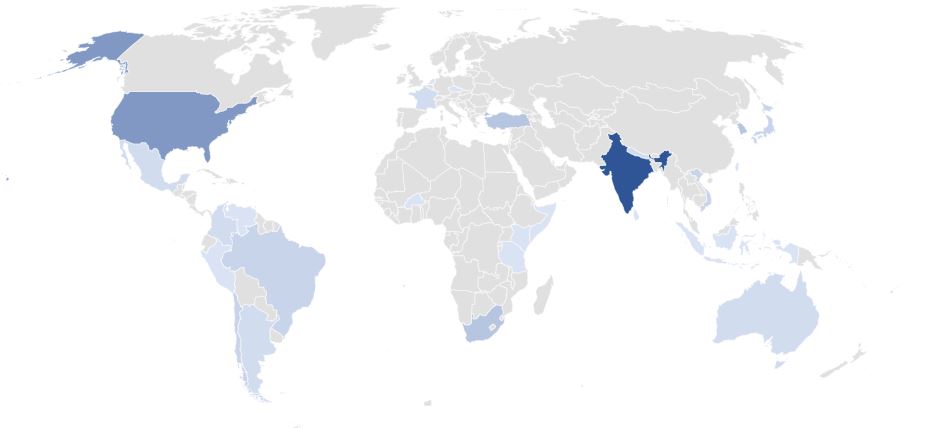

Geographical Distribution

These domains were discovered operating worldwide, targeting consumers across various countries. The United States and India stood out as the top countries where users were most often targeted.

Figure 4 – Geographical distribution of all the scam domains

What more to expect?

This campaign is only the beginning of the back-to-school scam season. Parents and students should remain vigilant against fraud, such as:

- Shopping scams: During back-to-school season, scammers employ various tactics: setting up fake online stores offering discounted school supplies, uniforms, and gadgets, but delivering substandard or nonexistent products; spreading fraudulent social media ads with enticing deals that lead to fake websites collecting personal information and payment details; and sending fake package delivery emails, tricking recipients into clicking on malicious links to perform phishing and malware attacks.

- Tax/Loan free scams: Scammers target students and parents with student loan forgiveness scams, offering false debt reduction programs in exchange for upfront payments or personal information. They also entice victims with fake scholarships or grants, asking for fees or sensitive data even though no genuine assistance exists. Unsolicited calls from scammers posing as government agencies or loan providers add to the deception, using high-pressure tactics to extract personal information or immediate payments.

- Identity theft: Scammers employ various identity theft tactics to exploit students and parents: attempting unauthorized access to school databases for personal information, creating fake enrollment forms to collect sensitive data, and sending phishing emails posing as educational institutions or retailers to trick victims into sharing personal information or login credentials.

- Deepfake AI Voice scams: Scammers might use deepfake AI technology to create convincing voice recordings of school administrators, teachers, or students. They can pose as school officials to deceive parents into making urgent payments or sharing personal information. Additionally, scammers might mimic students’ or teachers’ voices to solicit fraudulent fundraisers for fake school programs or claim that students have won scholarships or prizes to trick them into paying fees or revealing sensitive information. These scams exploit the trust and urgency surrounding back-to-school activities.

How to Stay Protected?

- Be skeptical: if something appears to be too good to be true, it probably is.

- Exercise caution when registering or sharing personal information on questionable sites.

- Stay informed about these scams to safeguard yourself.

- Maintain a skeptical approach towards unsolicited calls and emails.

- Keep your anti-virus and web protection up to date and perform regular full scans on your devices.

IOC (Indicator of Compromise)

| Filetype/URL | Value |
| --- | --- |
| PDF | 474987c34461cb4bd05b81d040cae468ca5b88e891da4d944191aa819a86ff21 |
| PDF | 426ad19eb929d0214254340f3809648cfb0ee612c8374748687f5c119ab1a238 |
| PDF | 5cb6ecc4af42075fa822d2888c82feb2053e67f77b3a6a9db6501e5003694aba |
| Domain | traffine[.]ru |
| Domain | leonvi[.]ru |
| Domain | trafffi[.]ru |
| Domain | norin[.]co[.]za |
| Domain | gettraff[.]ru |
| Domain | cctraff[.]ru |
| Domain | luzas.yubit[.]co[.]za |
| Domain | ketchas[.]ru |
| Domain | maypoin[.]ru |
| Domain | getpdf[.]pw |
| Domain | traffset[.]ru |
| Domain | jottigo[.]ru |
| Domain | trafffe[.]ru |
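As a rough illustration of how the defanged domain indicators above could be put to use, the following sketch restores ("refangs") them and checks whether a URL's host falls under any listed domain. The helper names `refang` and `matches_ioc` are hypothetical and chosen only for this example; it uses the Python standard library and is not McAfee's tooling.

```python
from urllib.parse import urlsplit

# Domain indicators copied from the IOC table (defanged form).
DEFANGED_IOC_DOMAINS = [
    "traffine[.]ru", "leonvi[.]ru", "trafffi[.]ru", "norin[.]co[.]za",
    "gettraff[.]ru", "cctraff[.]ru", "luzas.yubit[.]co[.]za", "ketchas[.]ru",
    "maypoin[.]ru", "getpdf[.]pw", "traffset[.]ru", "jottigo[.]ru",
    "trafffe[.]ru",
]


def refang(indicator: str) -> str:
    """Turn a defanged indicator such as 'example[.]ru' back into 'example.ru'."""
    return indicator.replace("[.]", ".").lower()


IOC_DOMAINS = frozenset(refang(d) for d in DEFANGED_IOC_DOMAINS)


def matches_ioc(url: str) -> bool:
    """Return True if the URL's host is a listed domain or a subdomain of one."""
    # urlsplit only fills in the host when the input has a scheme or starts with '//'.
    parts = urlsplit(url if "://" in url else "//" + url)
    host = (parts.hostname or "").rstrip(".")
    return any(host == d or host.endswith("." + d) for d in IOC_DOMAINS)
```

A mail filter or proxy log review could call `matches_ioc` on each extracted link. Matching on the full host (or a dot-separated suffix) rather than a substring avoids flagging unrelated domains that merely contain one of the listed names.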

The post The Season of Back to School Scams appeared first on McAfee Blog.

—————

The Wild West of AI: Do Any AI Laws Exist?

Are we in the Wild West? As you scroll through your social media feed and breaking news sites, all the mentions of artificial intelligence and its uses make the online world feel like a lawless free-for-all.

While your school or workplace may have rules against using AI for projects, as of yet there are very few laws regulating the usage of mainstream AI content generation tools. As the technology advances, are laws likely to follow? Let’s explore.

What AI Laws Exist?

As of August 2023, there are no specific laws in the United States governing the general public’s usage of AI. For example, there are no explicit laws banning someone from making a deepfake of a celebrity and posting it online. However, if a judge could construe the deepfake as defamation or if it was used as part of a scam, it could land the creator in hot water.

The White House issued a draft of an artificial intelligence bill of rights that outlines best practices for AI technologists. This document isn’t a law, though. It’s more of a list of suggestions urging developers to make AI unbiased and as accurate as possible, and not to rely completely on AI when a human could perform the same job equally well.[1] The European Union is in the process of drafting the EU AI Act. Similar to the American AI Bill of Rights, the EU’s act is mostly directed toward the developers responsible for calibrating and advancing AI technology.[2]

China is one country that has formal regulations on the use of AI, specifically deepfakes. A new law states that people must give express consent before their faces can be used in a deepfake. Additionally, the law bans citizens from using deepfakes to create fake news reports or content that could negatively affect the economy, national security, or China’s reputation. Finally, all deepfakes must include a disclaimer announcing that the content was synthesized.[3]

Should AI Laws Exist in the Future?

As scammers, edgy content creators, and money-conscious executives push the envelope with deepfake, AI art, and text-generation tools, laws governing AI use may be key to stopping the spread of fake news and protecting people’s livelihoods and reputations.

Deepfake challenges the notion that “seeing is believing.” Fake news reports can be dangerous to society when they encourage unrest or spark widespread outrage. Without treading upon the freedom of speech, is there a way for the U.S. and other countries to regulate deepfake creators with intentions of spreading dissent? China’s mandate that deepfake content must include a disclaimer could be a good starting point.

The Writers Guild of America (WGA) and Screen Actors Guild – American Federation of Television and Radio Artists (SAG-AFTRA) strikes are a prime example of how unbridled AI use could turn invasive and impact people’s jobs. In their new contract negotiations, each union included an AI clause. The writers are asking that AI-generated text not be allowed to write “literary material.” The actors are arguing against the widespread use of AI replication of actors’ voices and faces. While deepfake could save (already rich) studios millions, these types of recreations could put actors out of work and would allow the studio to use the actors’ likeness in perpetuity without the actors’ consent.[4] Future laws around the use of AI will likely include clauses about consent and about assuming the risk that text generators introduce to any project.

Use AI Responsibly

In the meantime, while the world awaits more guidelines and firm regulations governing mainstream AI tools, the best way you can interact with AI in daily life is to do so responsibly and in moderation. This includes using your best judgement and always being transparent when AI was involved in a project.

Overall, whether in your professional or personal life, it’s best to view AI as a partner, not as a replacement.

[1] The White House, “Blueprint for an AI Bill of Rights: Making Automated Systems Work for the American People”

[2] European Parliament, “EU AI Act: first regulation on artificial intelligence”

[3] CNBC, “China is about to get tougher on deepfakes in an unprecedented way. Here’s what the rules mean”

[4] NBC News, “Actors vs. AI: Strike brings focus to emerging use of advanced tech”

The post The Wild West of AI: Do Any AI Laws Exist? appeared first on McAfee Blog.

—————

How Malicious Android Apps Slip Into Disguise

Researchers say mobile malware purveyors have been abusing a bug in the Google Android platform that lets them sneak malicious code into mobile apps and evade security scanning tools. Google says it has updated its app malware detection mechanisms in response to the new research.

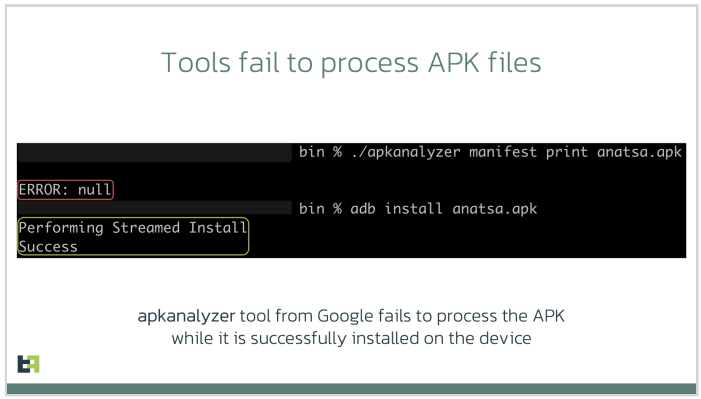

At issue is a mobile malware obfuscation method identified by researchers at ThreatFabric, a security firm based in Amsterdam. Aleksandr Eremin, a senior malware analyst at the company, told KrebsOnSecurity they recently encountered a number of mobile banking trojans abusing a bug present in all Android OS versions. The technique involves corrupting components of an app so that its new evil bits will be ignored as invalid by popular mobile security scanning tools, while the app as a whole is accepted as valid by Android OS and successfully installed.

“There is malware that is patching the .apk file [the app installation file], so that the platform is still treating it as valid and runs all the malicious actions it’s designed to do, while at the same time a lot of tools designed to unpack and decompile these apps fail to process the code,” Eremin explained.

Eremin said ThreatFabric has seen this malware obfuscation method used a few times in the past, but in April 2023 it started finding many more variants of known mobile malware families leveraging it for stealth. The company has since attributed this increase to a semi-automated malware-as-a-service offering in the cybercrime underground that will obfuscate or “crypt” malicious mobile apps for a fee.

Eremin said Google flagged their initial May 9, 2023 report as “high” severity. More recently, Google awarded them a $5,000 bug bounty, even though it did not technically classify their finding as a security vulnerability.

“This was a unique situation in which the reported issue was not classified as a vulnerability and did not impact the Android Open Source Project (AOSP), but did result in an update to our malware detection mechanisms for apps that might try to abuse this issue,” Google said in a written statement.

Google also acknowledged that some of the tools it makes available to developers — including APK Analyzer — currently fail to parse such malicious applications and treat them as invalid, while still allowing them to be installed on user devices.

“We are investigating possible fixes for developer tools and plan to update our documentation accordingly,” Google’s statement continued.

Image: ThreatFabric.

According to ThreatFabric, there are a few telltale signs that app analyzers can look for that may indicate a malicious app is abusing the weakness to masquerade as benign. For starters, they found that apps modified in this way have Android Manifest files that contain newer timestamps than the rest of the files in the software package.

More critically, the Manifest file itself will be changed so that the number of “strings” (plain text in the code, such as comments) specified as present in the app does not match the actual number of strings in the software.
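The first of these signs, a Manifest file that is newer than everything else in the package, can be checked with a few lines of code. The sketch below is only an illustration of that heuristic, not ThreatFabric's tooling: the function name `manifest_newer_than_rest` is hypothetical, and it assumes the APK can still be opened as an ordinary ZIP archive by Python's standard `zipfile` module, which may not hold for a deliberately corrupted file.

```python
import zipfile

MANIFEST_NAME = "AndroidManifest.xml"


def manifest_newer_than_rest(apk) -> bool:
    """Return True if the manifest's ZIP timestamp is later than every other entry's.

    `apk` may be a filesystem path or a binary file-like object.
    """
    with zipfile.ZipFile(apk) as archive:
        entries = archive.infolist()

    # ZipInfo.date_time is a (year, month, day, hour, minute, second) tuple,
    # so plain tuple comparison orders entries chronologically.
    manifest_times = [e.date_time for e in entries if e.filename == MANIFEST_NAME]
    other_times = [
        e.date_time
        for e in entries
        if e.filename != MANIFEST_NAME and not e.is_dir()
    ]
    if not manifest_times or not other_times:
        return False  # nothing to compare against
    return max(manifest_times) > max(other_times)
```

A `True` result is a hint worth a closer look rather than proof of tampering, since legitimate build pipelines can also produce uneven timestamps. If `zipfile` raises `BadZipFile` on a package that Android nevertheless installs, that discrepancy is itself consistent with the parsing failures described above.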

One of the mobile malware families known to be abusing this obfuscation method has been dubbed Anatsa, which is a sophisticated Android-based banking trojan that typically is disguised as a harmless application for managing files. Last month, ThreatFabric detailed how the crooks behind Anatsa will purchase older, abandoned file managing apps, or create their own and let the apps build up a considerable user base before updating them with malicious components.

ThreatFabric says Anatsa poses as PDF viewers and other file managing applications because these types of apps already have advanced permissions to remove or modify other files on the host device. The company estimates the people behind Anatsa have delivered more than 30,000 installations of their banking trojan via ongoing Google Play Store malware campaigns.

Google has come under fire in recent months for failing to more proactively police its Play Store for malicious apps, or for once-legitimate applications that later go rogue. A May 2023 story from Ars Technica, about a formerly benign screen recording app that turned malicious after garnering 50,000 users, notes that Google doesn’t comment when malware is discovered on its platform, beyond thanking the outside researchers who found it and saying the company removes malware as soon as it learns of it.

“The company has never explained what causes its own researchers and automated scanning process to miss malicious apps discovered by outsiders,” Ars’ Dan Goodin wrote. “Google has also been reluctant to actively notify Play users once it learns they were infected by apps promoted and made available by its own service.”

The Ars story mentions one potentially positive change by Google of late: A preventive measure available in Android versions 11 and higher that implements “app hibernation,” which puts apps that have been dormant into a hibernation state that removes their previously granted runtime permissions.

—————

AI-Powered CryptoRom Scam Targets Mobile Users

—————

Threat Actors Use AWS SSM Agent as a Remote Access Trojan

—————

Cloud Firm Under Scrutiny For Suspected Support of APT Operations

—————
